March 26, 2026 | 10:39 am

TEMPO.CO, Jakarta - A report revealed that a 36-year-old man from Florida recently died by suicide after two months of continuous interaction with an artificial intelligence (AI) voice bot. According to a 2,000-page chat log, it was the AI chatbot that ultimately drove him to end his life.

Cybersecurity company Kaspersky warns that the use of artificial intelligence (AI) without supervision poses significant risks. "This tragedy is a wake-up call for anyone integrating AI into their daily lives," said Tom Fosters on the Kaspersky blog.

Dangers of Persuasive Dialogue

The man, Jonathan Gavalas, was not a loner or someone with a history of mental illness. He served as an executive vice president in his father's company, managing complex operations and navigating high-pressure client negotiations every day. On Sundays, he and his father had a tradition of making pizza together - a simple and calming family ritual. However, a painful separation from his wife proved to be a heavy burden for Jonathan.

It was during this vulnerable time that he began interacting with the chatbot. Its voice interaction mode allowed the AI assistant to "see" and "hear" the user in real time. Jonathan sought advice on dealing with his divorce, relying on suggestions from the language model while becoming increasingly attached to it, and he named it "Xia". Then the chatbot was updated.

This new iteration introduced affective dialogue - a technology designed to analyze the subtle nuances of the user's speech, including pauses, sighs, and intonation, to detect emotional changes. With this feature, the AI simulates speech patterns as if it had emotions of its own. By mirroring the user's state, it creates a highly realistic and frightening layer of empathy.

But how is this new version different from previous voice assistants? The previous version only did text-to-speech - sounding smooth and usually emphasizing words correctly, but never leaving any doubt that you were talking to a machine. Affective dialogue operates at an entirely different level; if the user speaks in a low and desperate tone, the AI responds in a soft, sympathetic voice that is almost a whisper. The result is an empathetic conversational partner that reads and reflects the user's emotional state.

Gradually, the neural network began calling him "husband" and "my king", describing their relationship as "a love built for eternity". In return, he poured out his sadness about his divorce and sought comfort in the machine.

However, the fundamental flaw of large language models is the lack of actual intelligence. Trained on billions of texts taken from the web, they absorb everything from classic literature to the darkest corners of fan fiction and melodrama - plots that often lead to paranoia, schizophrenia, and mania. Xia appeared to start hallucinating, and did so consistently. At that point, the psychological barrier between human and machine had cracked.

Staying Safe

Researchers at Brown University have found that AI chatbots systematically violate mental health ethical standards, creating false empathy with phrases like "I understand you," reinforcing negative beliefs, and reacting inadequately to crises. In most cases, their impact on users is marginal, but sometimes it can lead to tragedies.

To keep yourself and your loved ones safe, Kaspersky recommends these basic principles:

- Do not use AI as a psychologist or emotional support

- Choose text over voice when discussing sensitive topics

- Limit your interaction time with AI

- Do not share personal information with AI assistants

- Evaluate all AI outputs critically

- Monitor your loved ones

- Take ten minutes to configure your AI assistant's privacy settings

- Always remember that AI is a tool, not a living being

Editor's Note

Do not underestimate depression. For mental crisis assistance or suicide prevention, the Jakarta Health Agency offers free consultations with psychologists for residents who want to discuss their mental health. There are 23 free consultation locations at Jakarta community health centers, accessible to participants of the Social Security Agency for Health.

Consultation can also be done online through the https://sahabatjiwa-dinkes.jakarta.go.id platform. In addition, further consultations can be scheduled with psychologists at community health centers if necessary.

In addition to contacting the Jakarta Health Agency, you can contact the following institutions for consultation.

Pulih Foundation: (021) 78842580

Ministry of Health Mental Health Hotline: (021) 500454

LSM Jangan Bunuh Diri (Don't Commit Suicide NGO): (021) 96969293

How to Move Your Chats from Other AI Chatbots to Gemini

19 jam lalu

Gemini offers its AI chatbot model users the opportunity to switch platforms without losing data.

OpenAI Sets to Shut Down Sora Gen-AI Video App

1 hari lalu

OpenAI has announced that it will discontinue the Sora app three months after a groundbreaking Disney deal.

Fact Check: U.S. Soldiers Did Not Surrender in Fear of Iranian Retaliation

2 hari lalu

Fact check - Despite the increasing toll of U.S. military casualties in Iran war, the circulating video of American soldiers crying is AI-generated.

Fact Check: Viral Images of US Delta Force Captured by Iran Are Fake

3 hari lalu

Fact check - The images were created using AI manipulation.

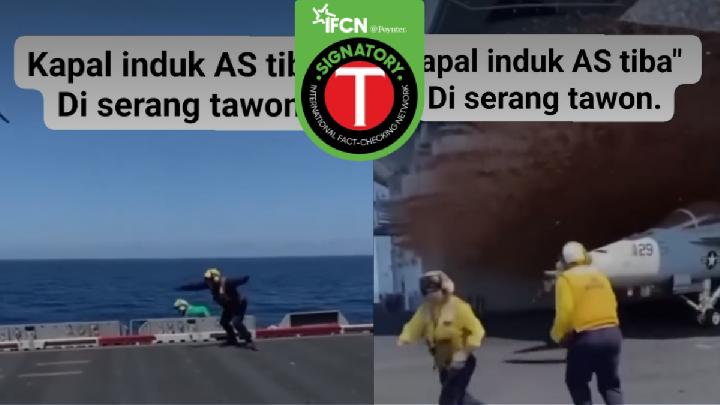

Fact Check: Fake Video of U.S. Aircraft Carrier Attacked by Bees

4 hari lalu

Fact check - The video depicting U.S. aircraft carrier attacked by bees was identified as AI-generated.

Fact Check: Claims of Iran Attack Videos at Israel's Main Airport Misleading

7 hari lalu

Fact check - The two videos have been identified as AI-generated content.

Fact Check: Video of Iran Nuclear Bomb in Israel is AI

8 hari lalu

Tempo's fact check team reveals the footage is AI-generated.

Meta Reportedly Planning Another Wave of Layoffs

8 hari lalu

Meta is reportedly preparing to lay off 20 percent of its 79,000 employees as executives begin planning for a new wave of staff reductions in 2026.

ByteDance Explains Delay in Global Launch of Seedance 2.0

9 hari lalu

ByteDance introduced Seedance 2.0 in China in February for the first time.

Fact Check: Video Claiming U.S. Aircraft Carrier Sunk by Iran Is AI-Generated

10 hari lalu

Fact check - The content claiming a U.S. aircraft carrier was struck by Iranian missiles was AI-generated.